5 Questions with Michael Atkin

1. Tell us about yourself.

I have a strange and sordid history. I’ve been an analyst and advocate for data management since 1985. I started with publishers–the owners of intellectual property–as they discovered concepts associated with the principles of data management. After publishing, I concentrated my efforts on the financial industry, learning how data goes from a “simple attribute” to a “contextual business concept.”

I helped to create the EDM Council as the big banks started to recognize data as the fuel that drove their business processes. This is when I realized that most entities weren’t really dealing with the underlying challenges of data management: they were just tactically repairing data problems when required.

I was actively involved in the aftermath of the financial crisis. I served on several regulatory advisory committees. I was the two-term chairman of the Data Committee at U.S. Treasury. I served on the Derivatives Advisory Committee for the CFTC. And I participated in the Financial Stability Board’s activities on data standards and legal entity identification. It has been quite a ride.

2. What are you working on?

I’m currently involved in two related activities. The first is the launch of the Enterprise Knowledge Graph Foundation. The Foundation is a non-profit organization dedicated to the adoption of web standards. Our goal is to help build the knowledge graph market and to support the adoption of best practices for sustainable operations. This is just a continuation of the work that I have been involved in for the past three decades.

Much of this work is about advocacy and how to best position the knowledge graph value proposition to executive stakeholders. One of the big problems I see is the amount of time we spend describing how semantic technology works rather than focusing on what it delivers. We have an opportunity to shift that narrative. I’m also working on the development of a capability and maturity model for sustainable operations. It might be best to think about this as an implementation playbook for EKG practitioners.

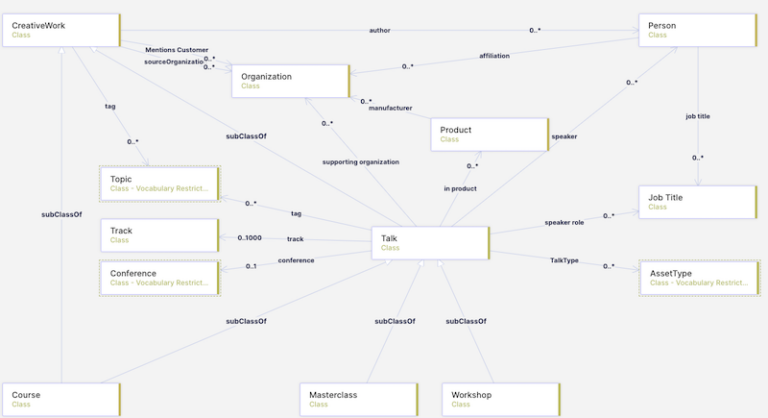

In addition to the Foundation, I’m a principal in agnos.ai – a London-based consulting firm focused on helping clients implement semantic technology. Agnos are a group of financial industry practitioners, data architects and knowledge graph engineers who design and build operational knowledge graphs for clients. I focus on strategic marketing and help by advising clients on the principles and practices of data management.

3. How did you become involved in KGs?

Knowledge graph technology is a natural extension of the work I have been doing over the past three decades. I’ve been quite fortunate in that I have watched the industry move through a couple of important transitions. At first, it was technology-driven. Scores of developments changed the way we distribute and integrate data. Then came the financial crisis and the data challenges associated with systemic risk. This led to the rise of data governance and helped embed the principles of data management into organizations. Both developments were important, but neither data processing nor data governance solved the problem of data silos and fragmented technology environments.

The activity that brought it together for me was the work I did on the Financial Industry Business Ontology (FIBO). FIBO was started to help the financial industry deal with the challenges of data incongruence–the problems caused by the same words meaning different things and the same thing described using different words. It evolved into a formal ontology based on RDF and OWL.

4. What makes KGs interesting?

The FIBO experience was an awakening, and the financial crisis created the impetus. Because semantic standards and knowledge graph do solve this problem. And that is exciting. Just think about the simplicity for a moment. Knowledge graphs are based on open standards. They allow for the capture and expression of data meaning. They provide freedom from schemas and rigid data structures. They validate data quality based on mathematical axioms. And they are modular and allow for the efficient reuse of data concepts.

In practical terms, the EKG is a content layer that serves to harmonize data from disparate sources across your entire organization. I like to think of the EKG as the Rosetta stone for data – and a mechanism to redirect resources away from data reconciliation and toward more useful business objectives. But it is the solvability message that is the most exciting.

5. What’s something about yourself that others may not know?

I do my best work with a fly rod in my hand and a flask of bourbon in my back pocket. And when I’m not fly fishing, you can find me riding my Trikke along the trails of Rock Creek Park.